Methodology for Emotional Analysis of Video Game Footage

by Ziad Abdelkarim*, Aurel Coza

*Email: zabdelka@asu.edu

Received: 05 Jan 2021 / Published: 22 Dec 2021

Abstract

Video games are a ubiquitous form of entertainment, and they have recently been adopted as a form of competition similar to traditional sporting events. It is generally agreed that video game players (both casual and professional) experience an elevated cognitive and emotional load while playing. However, little is known about the actual emotional and cognitive impact of these games. We propose a novel approach to quantifying in-game emotional state using publicly available data (game streams). A practical application of the method is presented in the form of an analysis of a specific game. The findings of this study suggest that emotional expression is directly linked to game dynamics and performance. Further studies are suggested that could build on this methodology to investigate the link between emotions, decision making, and other behavioral traits.

Introduction

Video games in general, and esports in particular, have been among the fastest-growing recreational and competitive activities in recent years (Perrin, 2018), and according to some estimates they are on track to overtake traditional sporting events in viewership and participation in the coming years (Candela & Jakee, 2018). From an economic perspective, the video game industry (hardware, software, and entertainment) has long since overtaken segments of the traditional sports industry, with revenues alone projected to reach over $1.6 billion this year (Macey, 2019). While the reach and socio-economic impact of video games and esports have grown steadily over the last two decades, the controversy surrounding them has grown at a similar rate. Some regard video gaming as a distraction from participation in traditional sports (Heiden et al., 2019), while others see it as the ultimate manifestation of the democratization of sport (Thiel & John, 2018). With recent proposals to include esports as an official competition at future ‘traditional’ sporting events such as the Olympic Games (Schaffhauser, 2019), it is imperative to understand the various aspects of esports performance. Controversy aside, a phenomenon of this magnitude requires the attention of the scientific community; otherwise it becomes the subject of pseudoscientific discussion or a matter of opinion with little or no scientific basis. Yet despite the ubiquity of video games and the debate surrounding them, very little is known about the impact of esports on players’ behavior and emotions. A first step toward answering these questions is to quantify players’ emotional responses directly during play. This would allow researchers to study the impact of emotions on game performance, and vice versa, with the eventual goal of developing training guidelines for performance and health.

While esports have many particularities that make them unique and appealing, one stands out the most: the continuous emotional engagement to which a player or viewer is subjected while playing or watching a game. A large number of studies have focused on the impact of video games on the limbs, eye strain, and posture (Abu-Naser & Al-Bayed, 2016) or have addressed philosophical questions about the ethics of video games (McCormick, 2001), but little is known about the effects of emotional strain on (a) game performance, (b) the health and wellness of players, and (c) the mechanisms responsible for the high appeal of esports. There is, however, a growing body of evidence suggesting that games are critical for healthy emotional development, and that games in general, and video games in particular, provide an environment for strengthening a range of cognitive skills such as spatial navigation, reasoning, memory, and perception (Bowen, 2014). Yet, to the best of our knowledge, very little quantitative information is available on the magnitude of the emotional load placed on video game players.

Methodology

In this context, we focus on creating the tools needed to quantify emotional expression during real-world video game play. We propose a new methodology that uses publicly available video recordings from Twitch, a live streaming platform for video games, on which both the player’s emotional expression and game performance can be monitored simultaneously. All footage was used lawfully under the fair use doctrine.

Emotional state, as conveyed through facial expression, can be quantified from the video recordings with one of the many available open-source or commercial emotion recognition tools. The resulting emotion, mood, and stress data can then be used on their own (as a time series) or correlated with various performance measures available from the game.

For this study, we opted for a commercially available facial expression analysis solution: AFFDEX from Affectiva (McDuff et al., 2016). The AFFDEX system for automated facial coding derives emotional expressions (anger, contempt, disgust, fear, joy, attention, surprise, engagement, sadness) from four main components: (1) face and facial landmark detection, (2) face texture feature extraction, (3) facial action classification, and (4) emotion expression modelling (McDuff et al., 2016). Landmark detection is applied to each detected facial bounding box to identify 34 landmarks; if the confidence of the landmark detection is below a threshold, the bounding box is ignored. Facial actions (e.g., brow furrow, nose wrinkle, lip raise, lip depress) are then classified from the extracted texture features. The facial landmarks, head pose, and interocular distance for each face are exposed by AFFDEX (McDuff et al., 2016) as a visualization overlay. Emotional expression scores are dimensionless and range from 0 (absent) to 100 (present). Although the software is not yet fully refined, its maintainers continue to improve its accuracy by annotating large video sets with metadata from self-reports and with neuroscientific measures such as electroencephalography (EEG), which records the electrical activity of the brain. Such annotations specify the emotional content of recordings and accelerate affective computing research by providing more comprehensive benchmarks for training and testing on spontaneous expressions (Dupré et al., 2020).

The platform used to integrate the AFFDEX SDK (software development kit) was iMotions 8.1 (iMotions Biosensor Software Platform - Unpack Human Behavior, n.d.), which offers an easy-to-use interface for video segmentation, analysis, and data export.

Subjects and Data Collection

This study focuses exclusively on the popular game Rocket League, and the open-source application Twitch Leecher was used to download the videos locally. The inset showing the player’s face was isolated by the AFFDEX software within iMotions and used to derive the players’ emotional expression throughout the competition. This process can be replicated entirely using live screen captures of gameplay, and within controlled environments for more accurate results.

The subjects were selected at random from the top three pages of the Rocket League streamers list on Twitch during the summer of 2020, subject to three criteria: stream quality, broadcast length, and a relatively large and clear recording of the streamer’s face within the overlay. Seven subjects were included in this study (four male, three female). Individual games were then annotated from start to finish within the recordings using the annotation functionality of iMotions. Each game was defined as running from the moment the screen displayed “GO!” until the game clock reached zero, and both teams’ final scores were recorded. The file exported from iMotions was then condensed with the Python libraries NumPy and pandas: the timeline of each emotional expression (7 channels: Attention, Engagement, Anger, Surprise, Fear, Contempt, Sadness) was averaged over each game and normalized between 0 and 1. The data were then processed further to produce the visualizations that follow.
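The per-game averaging and normalization step can be sketched with pandas as follows. This is an illustration under assumptions rather than the authors’ script: the column names (`timestamp_ms` and the channel headers), the `(start, end)` annotation format, and the helpers `label_games` and `per_game_means` are hypothetical. Because the text does not say how values were normalized to the 0 to 1 range, per-channel min-max scaling across games is assumed here; dividing the 0 to 100 AFFDEX scores by 100 would be a simpler alternative.

```python
import numpy as np
import pandas as pd

# Hypothetical column names; a real iMotions export uses its own headers.
TIME_COL = "timestamp_ms"
CHANNELS = ["Attention", "Engagement", "Anger", "Surprise", "Fear", "Contempt", "Sadness"]


def label_games(frames: pd.DataFrame, intervals: list[tuple[float, float]]) -> pd.DataFrame:
    """Tag each frame with the 1-based index of the annotated game that contains it.

    `intervals` holds (start, end) timestamps per game, e.g. from the "GO!" prompt
    to the clock reaching zero. Frames outside every game get NaN.
    """
    labels = pd.Series(np.nan, index=frames.index)
    for game_no, (start, end) in enumerate(intervals, start=1):
        inside = frames[TIME_COL].between(start, end)  # inclusive on both ends
        labels[inside] = game_no
    return frames.assign(game=labels)


def per_game_means(frames: pd.DataFrame, channels: list[str] = CHANNELS) -> pd.DataFrame:
    """Average each channel over every game, then min-max scale each channel to [0, 1]."""
    means = frames.dropna(subset=["game"]).groupby("game")[channels].mean()
    lo, hi = means.min(), means.max()
    span = (hi - lo).replace(0, np.nan)  # a constant channel would otherwise divide by zero
    return ((means - lo) / span).fillna(0.0)
```

Min-max scaling makes games comparable within a channel, but it discards absolute levels; the divide-by-100 variant keeps them, so the choice depends on the question being asked.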

Data

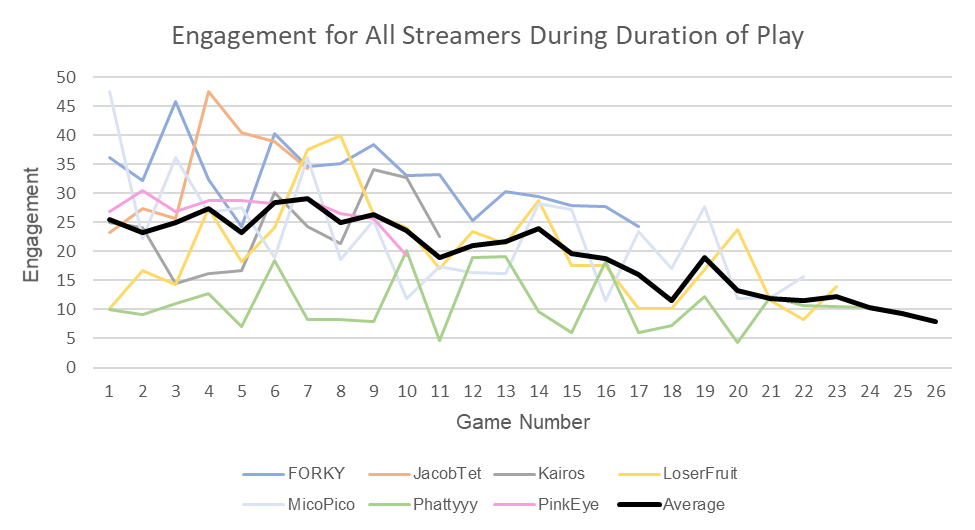

Figure 1. Average engagement levels for all players from game to game, along with an average trendline. Because the Rocket League streams were of varying length, the number of games played varied from player to player.

The Affectiva SDK defines engagement as a measure of facial muscle activation that reflects the subject’s expressiveness (Affectiva, 2017). On average, engagement decreased by 1.51% per game across all players over the course of their gameplay, as shown by the black average line.
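The paper does not give a formula for the trendline or for the 1.51% figure. One plausible way to compute such a per-game trend, sketched here under stated assumptions, is an ordinary least-squares line through the cross-player mean engagement at each game index. The function name `engagement_trend` and the input layout (one list of normalized per-game engagement values per player) are hypothetical, and we assume that “1.51%” refers to percentage points on the 0 to 1 normalized scale, i.e. a slope of about -0.0151; the authors’ exact procedure may differ.

```python
import numpy as np


def engagement_trend(per_player: list[list[float]]) -> float:
    """Slope (change per game) of a least-squares line through mean engagement.

    Players contribute different numbers of games, so the mean at game k uses
    only the players who played at least k games (shorter rows are NaN-padded).
    """
    n_max = max(len(games) for games in per_player)
    padded = np.full((len(per_player), n_max), np.nan)
    for i, games in enumerate(per_player):
        padded[i, : len(games)] = games
    mean_by_game = np.nanmean(padded, axis=0)  # ignores the NaN padding
    game_idx = np.arange(1, n_max + 1)
    slope, _intercept = np.polyfit(game_idx, mean_by_game, deg=1)
    return float(slope)
```

Averaging across players before fitting weights every game index equally; fitting each player separately and averaging the slopes would instead weight every player equally, which is an equally defensible reading of “per game per player”.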

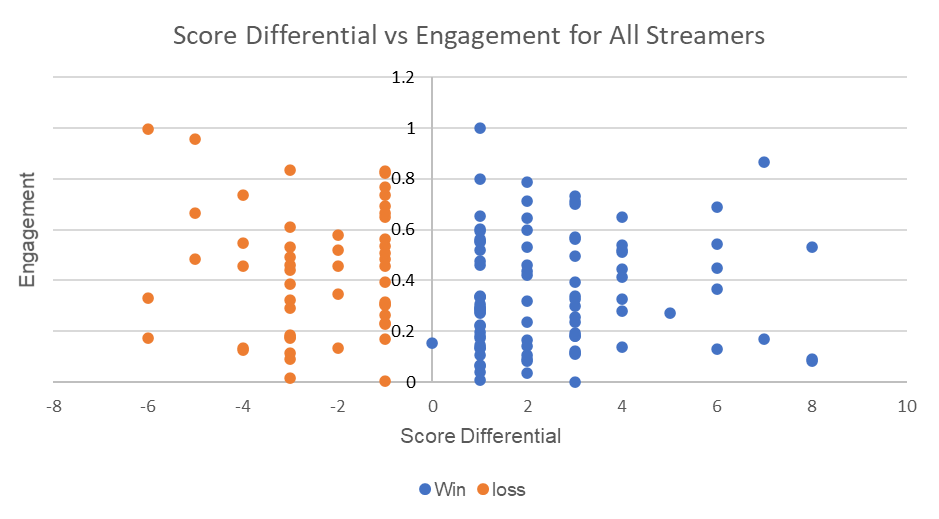

Figure 2. A scatter plot displaying the correlation between the streamers’ score differential and average engagement per game.

Games with a score differential between -1 and 1 account for 39.4% of all games played across all streamers. The win plotted at a differential of 0 resulted from a forfeit credited to the streamer.
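The two quantities discussed for Figure 2 (the relationship between score differential and engagement, and the share of close games) can be computed from a per-game table with a few NumPy calls. This is a hedged illustration, not the study’s code: `differential_summary` is a hypothetical helper, Pearson’s r is assumed as the correlation measure, and `margin=1` encodes the -1 to 1 interval reported above.

```python
import numpy as np


def differential_summary(score_diff, engagement, margin=1):
    """Return (Pearson r, share of games decided within +/- margin goals).

    score_diff: streamer's goals minus opponent's goals, one value per game.
    engagement: average (normalized) engagement for the same games, same order.
    """
    diff = np.asarray(score_diff, dtype=float)
    eng = np.asarray(engagement, dtype=float)
    r = np.corrcoef(diff, eng)[0, 1]  # off-diagonal entry of the 2x2 correlation matrix
    close_share = float(np.mean(np.abs(diff) <= margin))
    return float(r), close_share
```

For example, `differential_summary([-3, -1, 0, 1, 3], [0.1, 0.2, 0.3, 0.4, 0.5])` reports a close-game share of 0.6, since three of the five games fall within one goal.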

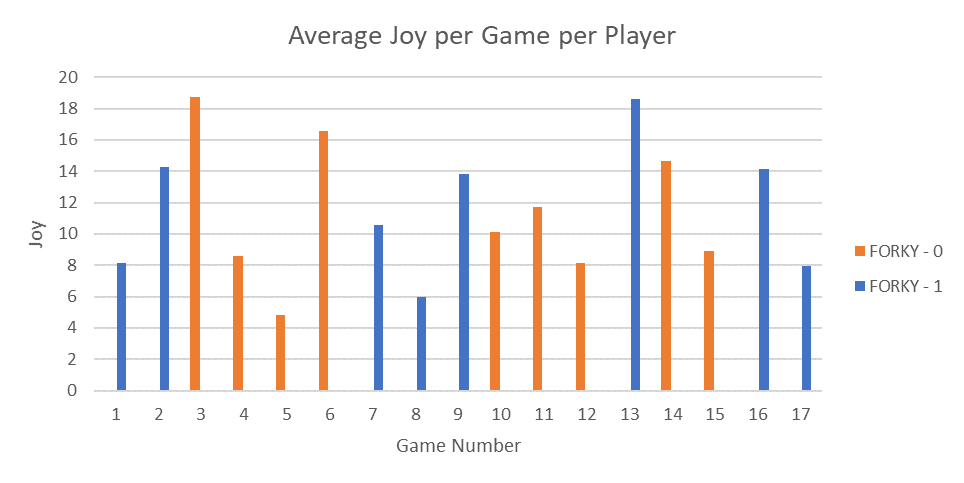

Figure 3. A bar chart displaying the average joy for each game played by FORKY with 0 indicating a loss and 1 indicating a win for the game.

FORKY is shown because his games contain pronounced streaks of consecutive wins and losses. As he initially begins winning, joy increases, and a sudden spike in joy follows four consecutive losses. Another spike in joy is observed at game 6, where another three-game winning streak occurs. A further three-game losing streak ensues, leading to a spike in joy for a single game, followed by two losses that lower joy. A win then brings joy back up, before it drops again in the next game.

Although streaks of this kind occurred for all subjects, the accompanying fluctuations in joy were less pronounced for some of them.
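To examine streak-related fluctuations like those described for FORKY systematically rather than by eye, the win/loss sequence can be run-length encoded and the change in an emotion channel measured at each streak boundary. The following is a minimal sketch using only the Python standard library; `streaks` and `joy_at_transitions` are hypothetical helpers, outcomes are assumed to be coded 1 = win and 0 = loss (the coding used in the bar charts), and `joy` is assumed to hold one per-game average per game, in the same order.

```python
from itertools import groupby


def streaks(outcomes: list[int]) -> list[tuple[int, int, int]]:
    """Run-length encode a win/loss sequence into (outcome, start_index, length) tuples."""
    runs, start = [], 0
    for outcome, group in groupby(outcomes):  # groups consecutive equal outcomes
        length = sum(1 for _ in group)
        runs.append((outcome, start, length))
        start += length
    return runs


def joy_at_transitions(outcomes: list[int], joy: list[float]) -> list[tuple[int, float]]:
    """For each streak boundary, return (first game of new streak, change in joy across it)."""
    boundaries = [start for _, start, _ in streaks(outcomes)[1:]]
    return [(b, joy[b] - joy[b - 1]) for b in boundaries]
```

For instance, `streaks([1, 1, 0, 0, 0, 1])` returns `[(1, 0, 2), (0, 2, 3), (1, 5, 1)]`. The same functions apply unchanged to any other channel, such as sadness.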

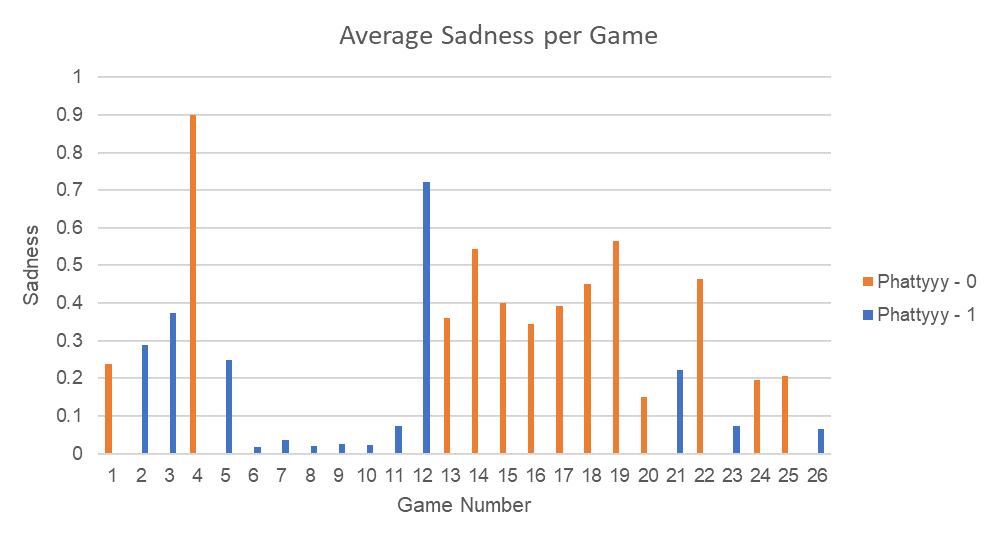

Figure 4. A bar chart displaying the average sadness for each game played by Phattyyy with 0 indicating a loss and 1 indicating a win for the game.

Phattyyy is shown because this streamer displays some of the same behaviors as FORKY. Sadness rises while the streamer is winning after the second game, with a large spike in sadness during game 4. This is followed by a long stretch of very low sadness during a seven-game winning streak, which ends with another large spike in sadness and a switch to an eight-game losing streak, during which sadness remains relatively high.

Discussion

We have shown that information about the emotional expression of esports players can be extracted from publicly available video game footage using existing video processing technologies, and that this approach can be extended as the software is refined. This creates research opportunities for a broad spectrum of disciplines focused on understanding the emotional and cognitive particularities of esports. Furthermore, even this limited dataset reveals trends that, to the best of our knowledge, have not been observed previously. For example, engagement decreased steadily over extended periods of play, by an average of 1.51% per game per player. This result is notable because performance does not appear to follow the same downward trend, so one would expect engagement to remain relatively elevated throughout the session. One possible explanation is that emotional engagement does stay elevated throughout the session, but its facial expression is ‘dulled’ by fatigue. An alternative explanation is that decision-making efficiency increases as play progresses, so that performance can be maintained despite the decrease in engagement. Further studies are needed to distinguish between these possibilities.

Nevertheless, the data suggest that the emotional display observed here is directly linked to the performance, actions, and outcomes of gameplay.

With the advent of new technologies for quantifying emotional expression and the large volume of publicly available game recordings (streams), the methodology described above makes it possible, for the first time and at a very large scale, to quantify emotional responses at a frequency high enough for continuous monitoring. This matters because, just as physical performance monitoring appears to be key to unlocking players’ potential and guiding training in traditional sports, continuous monitoring of emotional and cognitive load could serve the same purpose for video games. Once enough high-frequency emotional data are available, patterns of emotional response should emerge, allowing more accurate, game-specific feedback on performance and wellbeing.

When focusing on specific emotions (joy, sadness, etc.), there is surprisingly little correlation between the actual emotion values and performance. The dynamics of emotional expression, however, appear to be very rich: in particular, around transition points from a winning streak to a losing streak (or vice versa), a sudden shift in emotion is seen across subjects. Although this pattern could stem from an inherent mechanism of the game’s public matchmaking, these transitions could be explored in further studies in an attempt to predict game outcomes.

The lack of a controlled data-collection environment was one of several limitations of this study, although it is not a limitation of the methodology itself. iMotions also supports the integration of eye trackers and EEG, both of which should be considered in future research. In addition, the data collected in this study do not fully reflect the dynamics of esports competitions: streamers must interact with and entertain their viewers and often play with friends, which may yield different results from those of a competitive environment.

The aim of this study is to provide a proof-of-concept methodology that allows mass analysis of esports data with minimal investment and minimal computer science knowledge. Further studies can use the methodology described here to answer a variety of specific research questions. The large volume of available videos should allow human behavior researchers, game developers, game streaming broadcasters, esports players, coaches, and even public health professionals to gain insight into the emotional expression of video game players and its impact on performance, wellbeing, and health.

References

- Abu-Naser, S., & Al-Bayed, M. (2016). Detecting Health Problems Related to Addiction of Video Game Playing Using an Expert System. World Wide Journal of Multidisciplinary Research and Development, 2, 7–12.

- Affectiva. (2017). Emotion AI 101: All About Emotion Detection and Affectiva’s Emotion Metrics. Retrieved February 18, 2021, from https://blog.affectiva.com/emotion-ai-101-all-about-emotion-detection-and-affectivas-emotion-metrics

- Bowen, L. (2014). Video game play may provide learning, health, social benefits, review finds. Retrieved November 13, 2020, from https://www.apa.org/monitor/2014/02/video-game

- Candela, J., & Jakee, K. (2018). Can ESports Unseat the Sports Industry? Some Preliminary Evidence from the United States. Choregia, 14. https://www.researchgate.net/publication/329840491_Can_ESports_Unseat_the_Sports_Industry_Some_Preliminary_Evidence_from_the_United_States

- Dupré, D., Krumhuber, E. G., Küster, D., & McKeown, G. J. (2020). A performance comparison of eight commercially available automatic classifiers for facial affect recognition. PLOS ONE, 15(4), e0231968. https://doi.org/10.1371/journal.pone.0231968

- Heiden, J. M., Braun, B., Müller, K. W., & Egloff, B. (2019). The Association Between Video Gaming and Psychological Functioning. Frontiers in Psychology, 10. https://doi.org/10.3389/fpsyg.2019.01731

- iMotions Biosensor Software Platform—Unpack Human Behavior. (n.d.). Imotions. Retrieved February 25, 2021, from https://imotions.com/platform/

- Macey, J. (2019). Esports Final Report. Retrieved November 13, 2020, from https://www.academia.edu/44438540/Esports_Final_Report

- McCormick, M. (2001). Is It Wrong to Play Violent Video Games? Ethics and Information Technology, 3, 277–287. https://doi.org/10.1023/A:1013802119431

- McDuff, D., et al. (2016). AFFDEX SDK: A Cross-Platform Real-Time Multi-Face Expression Recognition Toolkit. In Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems (CHI EA ’16) (pp. 3723–3726). ACM Press. https://doi.org/10.1145/2851581.2890247

- Perrin, A. (2018). 5 Facts About Americans and Video Games. Pew Research Center. Retrieved November 13, 2020, from https://www.pewresearch.org/fact-tank/2018/09/17/5-facts-about-americans-and-video-games/

- Schaffhauser, B. D. (2019). Esports Joining Olympics in 2024. SteamUniverse. Retrieved November 16, 2020, from https://steamuniverse.com/articles/2019/07/30/esports-joining-olympics-in-2024.aspx

- Thiel, A., & John, J. M. (2018). Is eSport a ‘real’ sport? Reflections on the spread of virtual competitions. European Journal for Sport and Society, 15(4), 311–315. https://doi.org/10.1080/16138171.2018.1559019